Can you please start using crossposting rather than posting the same thing in 20 different communities? It’s super annoying to scroll past the same articles over and over, and you are doing it with all the content you post.

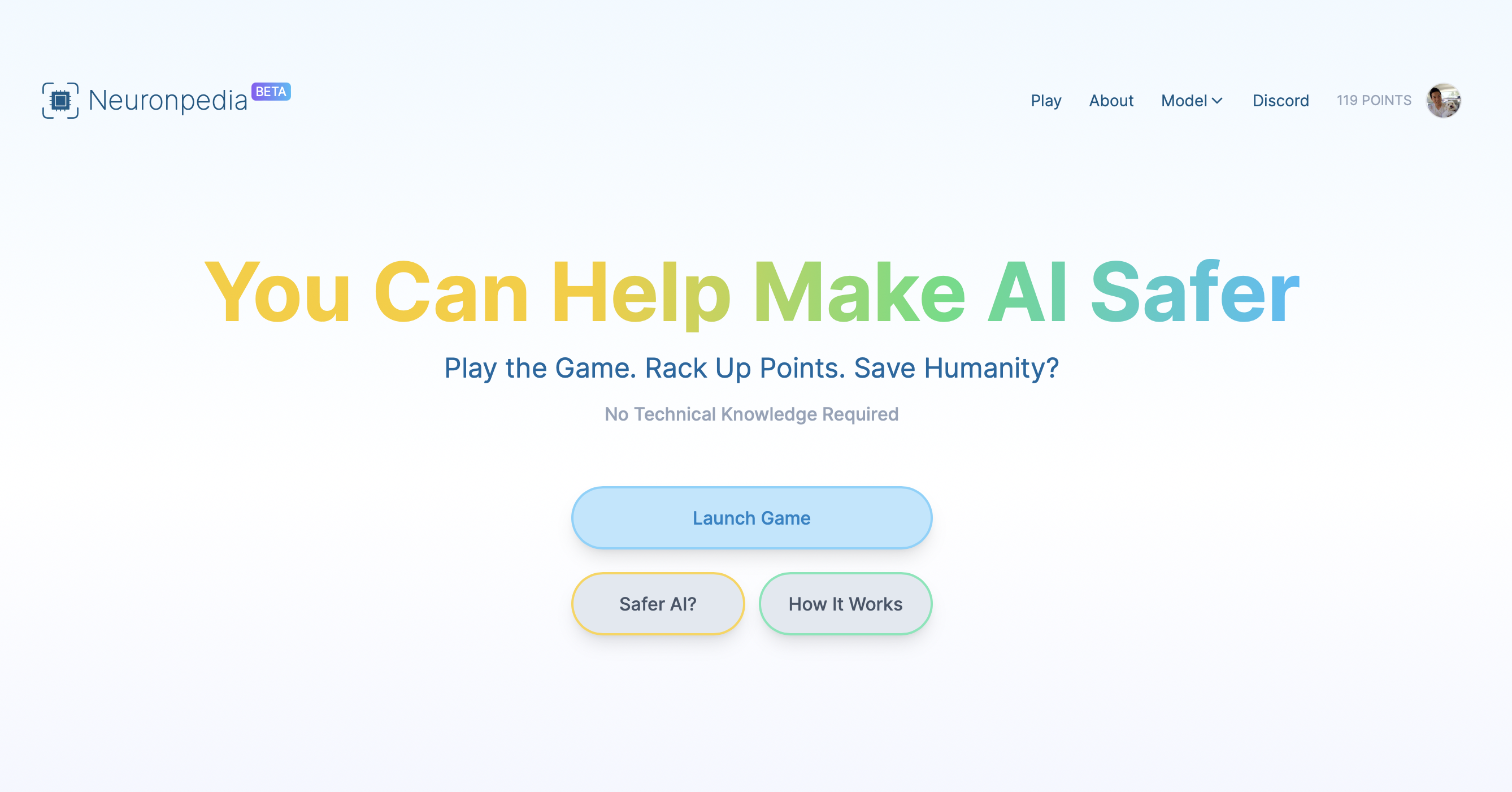

“I started working on Neuronpedia three weeks ago, and I’m posting on LessWrong to develop an initial community and for feedback and testing. I’m not posting it anywhere else, please do not share it yet in other forums like Reddit.”

Also, apparently the creator had problems with hitting API rate limits. This is definitely still in early testing, so unless you are the creator, it might be wise to refrain from advertising it too much.

Any explainers? How does building a concept association network make AI safer?

I think the idea is that there are potentially alignment issues in LLMs because it’s not clear which concepts map to which activations. That makes it difficult to see what they’re really “thinking” about when they generate text, e.g. whether they’re being misleading or are incorrectly associating concepts that shouldn’t be connected.

The idea here is to use some mechanistic interpretability stuff to see what text activates which neurons in an LLM, then crowdsource the meanings behind those neurons, and see if that gives you something you could use to look up context about what an AI is doing. Sort of trying to make a “Wikipedia of AI mind reading”.

Dunno how practical it is or how effective that approach is, but it’s an interesting idea.
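
For anyone who wants the lookup idea in concrete terms, here is a toy sketch. Everything in it is made up for illustration: the neuron names, the activation numbers, the votes, and the helper functions are all hypothetical, and none of it reflects how Neuronpedia is actually built. It only shows the general shape: record which text activates which neuron, let people vote on what each neuron seems to mean, then use those labels to describe what lit up for a given text.

```python
from collections import Counter

# Made-up activation strengths: neuron id -> {text snippet: activation}.
# In a real setup these would be recorded from a model's forward pass.
ACTIVATIONS = {
    "neuron_17": {"the cat sat": 0.91, "stock prices fell": 0.02, "a dog barked": 0.78},
    "neuron_42": {"the cat sat": 0.05, "stock prices fell": 0.88, "a dog barked": 0.01},
}

# Made-up crowdsourced guesses about what each neuron responds to.
VOTES = {
    "neuron_17": ["animals", "pets", "animals"],
    "neuron_42": ["finance", "finance", "numbers"],
}


def top_activating_texts(neuron, k=2):
    """Return the k snippets that activate the neuron most strongly."""
    scores = ACTIVATIONS[neuron]
    return sorted(scores, key=scores.get, reverse=True)[:k]


def consensus_label(neuron):
    """Return the most common crowdsourced label for the neuron."""
    return Counter(VOTES[neuron]).most_common(1)[0][0]


def explain(text, threshold=0.5):
    """List the labels of neurons whose activation on text meets the threshold."""
    return sorted(
        consensus_label(neuron)
        for neuron, scores in ACTIVATIONS.items()
        if scores.get(text, 0.0) >= threshold
    )


if __name__ == "__main__":
    print(top_activating_texts("neuron_17"))
    print(explain("stock prices fell"))
```

The majority vote is just the simplest way to combine labels; a real system would presumably need something more careful, since many neurons respond to several unrelated things at once.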