Background: 15 years of experience in software, and apparently spoiled, because everything was always already set up correctly for me.

I’ve been practicing running my own servers; I published a test site, and 24 hours later root was compromised.

Rolled back to the backup before I made it public and now I have a security checklist.

My basic setup is scripted for every new system. With regard to ssh, I make sure:

- Root account is disabled, sudo only

- ssh only by keys

- sshd blocks all users but a few, via AllowUsers

- All ‘default usernames’ are removed, like ec2-user or ubuntu for AWS ec2 systems

- The default ssh port moved if ssh has to be exposed to the Internet. No, this doesn’t make it “more secure” but damn, it reduces the script denials in my system logs, fight me.

- Services are only allowed connections by an allow list of IPs or subnets. Internal, when possible.
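The ssh items in the checklist above can be spot-checked in code. This is a minimal Python sketch, assuming you pass it the text of `/etc/ssh/sshd_config`. The default-username set is a hypothetical example. The parser ignores `Include` files and `Match` blocks, so `sshd -T` (which prints the settings sshd will actually use) remains the authoritative check.

```python
from typing import Dict, List

# Hypothetical examples of cloud-image default accounts that should not be allowed in.
DEFAULT_USERNAMES = {"ubuntu", "ec2-user", "admin", "pi"}


def parse_sshd_config(text: str) -> Dict[str, str]:
    """Map lowercase keyword -> value, keeping the first occurrence.

    sshd uses the first value it obtains for a keyword, so later duplicates
    are ignored. Parsing stops at the first Match block (not evaluated here),
    Include lines are not followed, and only "Keyword value" lines are handled.
    """
    settings: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # blank line or comment
        parts = line.split(None, 1)
        keyword = parts[0].lower()
        if keyword == "match":
            break
        value = parts[1].strip() if len(parts) > 1 else ""
        settings.setdefault(keyword, value)
    return settings


def audit(settings: Dict[str, str]) -> List[str]:
    """Return the checklist items that the parsed settings fail."""
    problems: List[str] = []
    # When a keyword is absent, OpenSSH's built-in default applies.
    if settings.get("permitrootlogin", "prohibit-password").lower() != "no":
        problems.append("root login is not fully disabled")
    if settings.get("passwordauthentication", "yes").lower() != "no":
        problems.append("password authentication is enabled")
    allowed = set(settings.get("allowusers", "").split())
    if not allowed:
        problems.append("no AllowUsers allow list")
    elif allowed & DEFAULT_USERNAMES:
        problems.append("a default username is still allowed")
    if settings.get("port", "22") == "22":
        problems.append("still on port 22 (log noise, not a vulnerability)")
    return problems
```

Running `audit(parse_sshd_config(open("/etc/ssh/sshd_config").read()))` on a fresh image is a quick way to see how far its defaults are from the list.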

My systems are not “unhackable,” but they’re not low-hanging fruit, either. I assume everything I have out there can be hacked by someone SUPER determined, and I have layers of protection to limit the fallout in case they gain full access.

> - The default ssh port moved if ssh has to be exposed to the Internet. No, this doesn’t make it “more secure” but damn, it reduces the script denials in my system logs, fight me.

Gosh, I get unreasonably frustrated when someone says “yeah, but that’s just security through obscurity.” Like, yeah, we all know what nmap is; a persistent threat will just scan all 65535 ports and figure out where ssh is listening… But if your threat model is bots? Logs are much cleaner, and moving ports gets rid of a lot of that traffic. Obviously so does enabling keys only.
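To see what moving the port actually buys you, you could count failed logins before and after the move. This is a sketch that assumes OpenSSH’s usual `Failed password ... from <ip> port <n>` log lines; the regex is an assumption about the format your sshd version writes.

```python
import re
from collections import Counter
from typing import Iterable, Tuple

# OpenSSH "Failed password"/"Failed publickey" log lines; other formats are ignored.
FAILED_LOGIN = re.compile(
    r"Failed (?:password|publickey) for (?:invalid user )?(?P<user>\S+) "
    r"from (?P<ip>\S+) port \d+"
)


def failed_attempts(lines: Iterable[str]) -> Tuple[Counter, Counter]:
    """Count failed ssh logins per source address and per attempted username."""
    by_ip: Counter = Counter()
    by_user: Counter = Counter()
    for line in lines:
        match = FAILED_LOGIN.search(line)
        if match:
            by_ip[match.group("ip")] += 1
            by_user[match.group("user")] += 1
    return by_ip, by_user
```

On Debian/Ubuntu you could pass it `open("/var/log/auth.log")`. RHEL-family systems log to `/var/log/secure`, and journald-only hosts need the output of `journalctl -u ssh` (or `-u sshd`) instead. Comparing a week on port 22 against a week on a high port shows the log-noise difference being described.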

Also does anyone still port knock these days?

> Also does anyone still port knock these days?

Enter Masscan, probably a net negative for the internet, so use with care.

I didn’t see anything about port knocking there, it rather looks like it has the opposite focus - a quote from that page is “features that support widespread scanning of many machines are supported, while in-depth scanning of single machines aren’t.”

Sure yeah it’s a discovery tool OOTB, but I’ve used it to perform specific packet sequences as well.

Literally the only time I got somewhat hacked was when I left the service on its default port. Obscurity is reasonable; combined with other measures like the ones mentioned here, it makes you pretty much invulnerable to casual attackers. Somebody needs to target you to get anything.

I use port knocking. It really helps against scans if you are the edge device.
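Port knocking keeps the real port closed until a client touches a secret sequence of other ports in order. Below is a minimal sketch of the server-side bookkeeping, not how knockd or any particular daemon is implemented. The sequence is hypothetical, and real implementations also expire half-finished sequences after a timeout, which this omits.

```python
from typing import Dict, Sequence


class KnockTracker:
    """Per-source progress through a secret port sequence."""

    def __init__(self, sequence: Sequence[int]) -> None:
        if not sequence:
            raise ValueError("knock sequence must not be empty")
        self.sequence = list(sequence)
        self.progress: Dict[str, int] = {}

    def hit(self, src_ip: str, port: int) -> bool:
        """Record one connection attempt; return True when the sequence completes."""
        step = self.progress.get(src_ip, 0)
        if port == self.sequence[step]:
            step += 1
        elif port == self.sequence[0]:
            step = 1  # a wrong knock on the first port restarts the sequence
        else:
            step = 0  # any other port resets progress
        if step == len(self.sequence):
            self.progress.pop(src_ip, None)
            return True
        self.progress[src_ip] = step
        return False
```

A sequential scan never completes the sequence, because every wrong port resets that source’s progress. Once `hit()` returns True, the daemon would open the ssh port for that one source address only.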

> Also does anyone still port knock these days?

If they did, would we know?

One time, I didn’t realize I had allowed all users to log in via ssh, and I had a user “steam” whose password was just “steam”.

“Hey, why is this Valheim server running like shit?”

“Wtf is xrx?”

“Oh, it looks like it’s mining crypto. Cool. Welp, gotta nuke this whole box now.”

So anyway, now I use NixOS.

Good point about a default deny approach to users and ssh, so random services don’t add insecure logins.

Interesting. Do you know how it got compromised?

I published it to the internet and the next day, I couldn’t ssh into the server anymore with my user account and something was off.

Tried root + password, also failed.

Immediately facepalmed because the password was the generic 8 characters and there was no fail2ban to stop guessing.
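The missing piece here was rate limiting. fail2ban watches the logs and bans a source after too many failures within a time window (its `maxretry`, `findtime`, and `bantime` settings). This sketch models that rule in Python; the numbers are illustrative assumptions, and a real setup bans through the firewall rather than in-process.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class BanTracker:
    """Ban a source after max_retry failures within find_time seconds."""

    def __init__(self, max_retry: int = 5, find_time: float = 600.0, ban_time: float = 600.0) -> None:
        self.max_retry = max_retry
        self.find_time = find_time
        self.ban_time = ban_time
        self.failures: Dict[str, Deque[float]] = defaultdict(deque)
        self.banned_until: Dict[str, float] = {}

    def is_banned(self, ip: str, now: float) -> bool:
        return self.banned_until.get(ip, float("-inf")) > now

    def record_failure(self, ip: str, now: float) -> bool:
        """Record one failed login at time `now`; return True if the source is banned."""
        if self.is_banned(ip, now):
            return True
        window = self.failures[ip]
        window.append(now)
        # Forget failures that fell out of the find_time window.
        while window and now - window[0] > self.find_time:
            window.popleft()
        if len(window) >= self.max_retry:
            self.banned_until[ip] = now + self.ban_time
            window.clear()
            return True
        return False
```

The window only throttles guessing: a patient attacker who spaces attempts out is never banned, which is why keys-only auth matters more than the ban itself.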

deleted by creator

More importantly, don’t open up SSH to public access. Use a VPN connection to the server. This is really easy to do with Netbird, Tailscale, etc. You should only ever be able to connect to SSH privately, never over the public net.

It’s perfectly safe to run SSH on port 22 towards the open Internet with public key authentication only.

https://nvd.nist.gov/vuln/detail/cve-2024-6409 Unauthenticated RCE via OpenSSH (inside sshd’s unprivileged privsep child in this case; its sibling CVE-2024-6387 gave root). If they’ve got a connection, that’s more than nothing, and sometimes it’s enough.

That attack vector is exactly the same towards a VPN.

A VPN like Wireguard can run over UDP on a random port which is nearly impossible to discover for an attacker. Unlike sshd, it won’t even show up in a portscan.

This was a specific design goal of Wireguard by the way (see “5.1 Silence is a virtue” here https://www.wireguard.com/papers/wireguard.pdf)

It also acts as a catch-all for all your services, so instead of worrying about the security of all the different sshds or other services you may have exposed, you just have to keep your vpn up to date.

Are you talking a VPN running on the same box as the service? UDP VPN would help as another mentioned, but doesn’t really add isolation.

If your VPN box is standalone, then getting root there is bad but just step one. They have to own the VPN to even do more recon and then try SSH.

Defense in depth. They didn’t immediately get server root and application access in one step. Now they have to connect to a patched, cert only, etc SSH server. Just looking for it could trip into some honeypot. They had to find the VPN host as well which wasn’t the same as the box they were targeting. That would shut down 99% of the automated/script kiddie shit finding the main service then scanning that IP.

You can’t argue that one step to own the system is more secure than two separate pieces of updated software on separate boxes.

what’s netbird

https://netbird.io. Wireguard based software defined networking, very similar to Tailscale.

Tailscale? Netbird? I have been using Hamachi like a fucking neanderthal. I love these posts, I learn so much.

wow crazy that this was the default setup. It should really force you to either disable root or set a proper password (or warn you)

deleted by creator

Which ones? I’m asking because that isn’t true for CentOS, Rocky, or Arch.

Mostly Ubuntu. And… I think it’s just Ubuntu.

Fedora (immutable at least) has it disabled by default I think, but it’s just one checkbox away in one of the setup menus.

Standard Fedora does as well

Ah fair enough, I know that’s the basis of a ton of distros. I lean towards RHEL so I’m not super fluent there.

deleted by creator

Yeah I was confused about the comment chain. I was thinking terminal login vs ssh. You’re right in my experience…root ssh requires user intervention for RHEL and friends and arch and debian.

Side note: did you mean to say “shot themselves in the root”? I love it either way.


Many cloud providers (the cheap ones in particular) will put patches on top of the base distro, so sometimes root always gets a password. Even for Ubuntu.

There are ways around this, like proper cloud-init support, but not exactly beginner friendly.

deleted by creator

Rocky asks during setup; I assume CentOS does too.

Love Hetzner. You just give them your public key and they boot you into a rescue system from which you can install what you want how you want.

I think their auction servers are a hidden gem. I mean, the prices used to be better; now they have some kind of system that resets them when they get too low. But the prices are still pretty good, I think. A year or two ago I got a pretty good deal on two decently spec’d servers.

People are scared off by the fact that you just get their rescue prompt on auction boxes… except their rescue prompt has a guided imaging tool to install pretty much every popular distro, with configurable RAID options, etc.

Yeah, I basically jump from auction system to auction system every other year or so and either get a cheaper or more powerful server or both.

I monitor for good deals. Because there’s no contract it’s easy to add one, move stuff over at your leisure and kill the old one off. It’s the better way to do it for semi serious stuff.

Now that you mentioned it, it didn’t! I recall even docker Linux setups would yell at me.

> because the password was the generic 8 characters and there was no fail2ban to stop guessing

Oof, yeah, that’ll do it. You’re usually fine as long as you’ve hardened enough to at least ward off the script kiddies. The people with actual real skill tend to go after… juicier targets lmao

Haha I’m pretty sure my little server was just part of the “let’s test our dumb script to see if it works. Oh wow it did what a moron!”

Lessons learned.

Which distro allows root to login via SSH?

All of them if you configure it?

Not very many. None of the enterprise ones, at least.

Ah, timeless classic.

I ran a standard Raspbian ssh server on my home network for several years. The default user was removed and my own user was in its place, root was configured as standard on Raspbian, and my account had a complex but fairly short password, with no keys set up.

I saw constant attacks but to my knowledge, it was never breached.

I removed it when I realized that my ISP might take a dim view of running a server on their home client net that they didn’t know about, especially since it showed up on Shodan…

Don’t do what I did, secure your systems properly!

But it was kinda cool to be able to SSH from Thailand back home to Sweden and browse my NAS, it was super slow, but damn cool…

Why would a Swedish ISP care? I’ve run servers from home since I first connected up in … 1996. I’ve had a lot of different ISPs during that time, although nowadays I always choose Bahnhof because of them fighting the good fights.

They probably don’t, unless I got compromised and bad traffic came from their network, but I was paranoid, and wanted to avoid the possibility.

> But it was kinda cool to be able to SSH from Thailand back home to Sweden and browse my NAS, it was super slow, but damn cool…

That feels like sorcery, doesn’t it? You can still do this WAY safer by using Wireguard or something a little easier like Tailscale. I use Tailscale myself to VPN to my NAS.

I get a kick out of showing people my NextCloud Memories albums or Jellyfin videos from my phone and saying “This is talking to the box in my house right now! Isn’t that cool!?” Hahaha.

I’m almost glad I had to go that route. Most of our ISPs here in the U.S. will block outgoing ports by default, so they can ~~keep the network safe~~ sell you a home business plan lol.

Lol ssh has no reason to be port exposed in 99% of home server setups.

VPNs are extremely easy and free; WireGuard is very performant, and OpenVPN is also fine for ssh. I have yet to see any use case for simply port-forwarding ssh in a home setup. Even a public git server can be tunneled through https.

Yeah I’m honest with myself that I’m a security newb and don’t know how to even know what I’m vulnerable to yet. So I didn’t bother opening anything at all on my router. That sounded way too scary.

Tailscale really is magic. I just use Cloudflare to forward a domain I own, and I can get to my services, my NextCloud, everything, from anywhere, and I’m reasonably confident I’m not exposing any doors to the innumerable botnet swarms.

It might be a tiny bit inconvenient if I wanted to serve anything to anyone not in my Tailnet or already on my home LAN (like sending someone a link to a NextCloud folder, for instance), but at this point, that’s quite the edge case.

I learned to set up NGINX proxy manager for a reverse proxy though, and that’s pretty great! I still harden stuff where I can as I learn, even though I’m confident nobody’s even seeing it.

Honestly, crowdsec with the nginx bouncer is all you need security-wise to start experimenting. It isn’t perfect security, but it is way more comprehensive than fail2ban for just getting started and figuring more out later.

Here is my Traefik-based CrowdSec Docker Compose file:

```yaml
services:
  crowdsec:
    # CrowdSec agent: parses the logs listed in acquis.yaml and makes block decisions
    image: crowdsecurity/crowdsec:latest
    container_name: crowdsec
    environment:
      GID: $PGID
    volumes:
      - $USERDIR/dockerconfig/crowdsec/acquis.yaml:/etc/crowdsec/acquis.yaml
      - $USERDIR/data/Volumes/crowdsec:/var/lib/crowdsec/data/
      - $USERDIR/dockerconfig/crowdsec:/etc/crowdsec/
      # Traefik's log, mounted read-only for CrowdSec to parse
      - $DOCKERDIR/traefik2/traefik.log:/var/log/traefik/traefik.log:ro
    networks:
      - web
    restart: unless-stopped

  bouncer-traefik:
    # Bouncer: enforces CrowdSec's decisions on traffic coming through Traefik
    image: docker.io/fbonalair/traefik-crowdsec-bouncer:latest
    container_name: bouncer-traefik
    environment:
      CROWDSEC_BOUNCER_API_KEY: $CROWDSEC_API
      CROWDSEC_AGENT_HOST: crowdsec:8080
    networks:
      - web # same network as traefik + crowdsec
    depends_on:
      - crowdsec
    restart: unless-stopped

networks:
  web:
    external: true
```

https://github.com/imthenachoman/How-To-Secure-A-Linux-Server is a more in-depth crash course in system-level security, but it hasn’t been updated in a while.

That’s rad! Thanks so much for sharing that! Definitely gonna give this a read. Very much appreciated. :)

Any idea what ip addresses were used to compromise it?

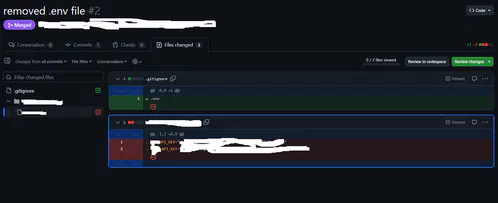

Lol you can actually demo a github compromise in real time to an audience.

Make a repo with an API key, publish it, and literally just watch as it takes only a few minutes before a script logs in.

I search commits for “removed env file” to hopefully catch people who don’t know how git works.

`--verbose` please?

edit: never mind, found it. So there are dumbasses storing sensitive data (keys!) inside their git folder and unable to configure .gitignore…

yeah, I just tried it there, people actually did it.

> yeah, I just tried it there, people actually did it.

I always start with a .gitignore, add .env to it, and only then create the .env file.

Anywho, there’s git filter-repo, which is quite nice and has retconned a few minor things out of existence in some of my repos :P

I searched for “added gitignore” and found an Ethereum wallet with 25 cents.
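Catching secrets before they are committed is cheaper than rewriting history later, and any key that was ever pushed should be rotated regardless, because `git filter-repo` cannot un-leak it. This is a minimal sketch of a pre-commit style check. The rule set is a hypothetical, deliberately tiny example, and the `.gitignore` check only looks for a literal `.env` line rather than evaluating gitignore patterns.

```python
import re
from typing import List, Tuple

# A deliberately tiny, hypothetical rule set; dedicated scanners ship far more.
RULES = {
    "aws_access_key_id": re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN (?:[A-Z]+ )*PRIVATE KEY-----"),
    "env_style_secret": re.compile(
        r"^\s*[A-Z0-9_]*(?:SECRET|TOKEN|API_KEY|PASSWORD)[A-Z0-9_]*\s*=\s*\S+"
    ),
}


def find_secrets(text: str) -> List[Tuple[str, int]]:
    """Return (rule name, 1-based line number) for every suspicious line."""
    hits: List[Tuple[str, int]] = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, rule in RULES.items():
            if rule.search(line):
                hits.append((name, lineno))
    return hits


def gitignore_lists_env(gitignore: str) -> bool:
    """True if .gitignore has a literal '.env' entry (glob patterns are not evaluated)."""
    return any(line.strip() in (".env", "/.env") for line in gitignore.splitlines())
```

You might feed `find_secrets` the output of `git diff --cached` from a pre-commit hook and refuse the commit when it returns anything.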

My work is transferring to github from svn currently

My condolences

You gremlin lmao

I’ve gotta say, this post made me appreciate switching to Lemmy. This post is actually helpful for the poor sap who didn’t know better, instead of pure salt like another site I won’t mention.

I shared it because out there, there is a junior engineer experiencing severe imposter syndrome. And here I am, someone who has successfully delivered applications with millions of users and advanced into leadership roles in the tech industry, overlooking basic security principles.

We all make mistakes!

There’s a 40-year I.T. veteran here who still suffers from imposter syndrome. It’s a real thing I’ve never been able to shake off.

Just look at who is in the White House, mate - and not just the president, but basically you can pick anyone he’s hand-picked for his staff.

Surely that’s an instant cure for any qualified person feeling imposter syndrome in their job.

Do not allow username/password login for ssh. Force certificate authentication only!

If it’s public-facing, how about not turning on ssh to the public at all; open it to select IPs or ranges. Use a non-standard port, use a cert, or even RADIUS with TOTP, like privacyIDEA. How about a port knocker to open the non-standard port as well? Autoban to lock out source IPs.

That’s just off the top of my head.

There’s a lot you can do to harden a host.

> dont turn on ssh to the public, open it to select ips or ranges

What if you don’t have a static IP, do you ask your ISP in what range their public addresses fall?

Sure. My ISP gave me this range for this exact reason.

> Do not allow username/password login for ssh

This is disabled by default for the root user.

```
$ man sshd_config
...
PermitRootLogin
        Specifies whether root can log in using ssh(1). The argument must be
        yes, prohibit-password, forced-commands-only, or no. The default is
        prohibit-password.
...
```

Why though? If u have a strong password, it will take eternity to brute force
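Whether a password takes “eternity” depends on how it was chosen, not just on its length. A back-of-the-envelope sketch: the 1,000 guesses per second and the 10-million-entry wordlist are assumptions for illustration, not measurements.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def keyspace(alphabet_size: int, length: int) -> int:
    """How many distinct passwords of exactly `length` symbols exist."""
    return alphabet_size ** length


def years_to_exhaust(candidates: int, guesses_per_second: float) -> float:
    """Worst-case years to try every candidate at a constant guess rate."""
    return candidates / guesses_per_second / SECONDS_PER_YEAR


# Assumed attacker speed: 1,000 online guesses per second, no rate limiting.
RATE = 1_000
# A truly random 8-character password over the 95 printable ASCII symbols.
random_8 = years_to_exhaust(keyspace(95, 8), RATE)
# A wordlist of 10 million common/leaked passwords (size is an assumption).
wordlist = years_to_exhaust(10_000_000, RATE)
```

Under these assumptions the random 8-character password takes on the order of 200,000 years to exhaust, while the whole wordlist falls in under three hours, which is how a “generic” 8-character password gets guessed.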

How are people’s servers getting compromised? I’m no security expert (I’ve never worked in tech at all), and I have a public VPS that has never been compromised. I mainly just use SSH keys, not passwords, and I don’t do anything too crazy. Like, if you have open SSH on port 22 with root login enabled and your root password is `password123`, then maybe, but I’m surprised I’ve never been pwned if it’s so easy to get got…

By allowing password login and using weak passwords, or by reusing passwords that have been involved in a data breach somewhere.

That makes sense. It feels a bit mad that the difference between getting pwned super easy vs not is something simple like that. But also reassuring to know, cause I was wondering how I heard about so many hobbyist home labs etc getting compromised when it’d be pretty hard to obtain a reasonably secured private key (ie not uploaded onto the cloud or anything, not stored on an unencrypted drive that other people can easily access, etc). But if it’s just password logins that makes more sense.

glad my root pass is `toor` and not something as obvious as `password123`

`toor`, like Tor, the leet hacker software. So it must be super secure.

The one db I saw compromised at a previous employer was an AWS RDS with public Internet access open and default admin username/password. Luckily it was just full of test data, so when we noticed its contents had been replaced with a ransom message we just deleted the instance.

That’s incredible, I’ve got the same combination on my luggage.

Looking at OP’s other comment: weak password and no fail2ban.

As a Linux n00b who just recently took the plunge and set up a public site (though really just for my own use / self-hosting), can anyone recommend a good guide or starting place for hardening the setup? I’m running Mint on my former gaming rig, and the site is set up as LAMP.

The other poster gave you a lot. If that’s too much at once, the really low hanging fruit you want to start with is:

- Choose an active, secure distro. There are a lot of flavors of Linux out there, and they can be fun to try, but if you’re putting something up publicly, it should be running on one that’s well maintained and known for security. Debian and Rocky Linux (one of the successors to CentOS Linux, which has reached end of life) are excellent, easy choices, for example.

- Similarly, pick well-maintained software with a track record. Nginx and Apache have been around forever and have excellent track records, for example, both for being secure and for fixing flaws quickly.

- If you use Docker, once again keep an eye out for things that are actively maintained. If you decide to use Nginx, there will be five million containers to choose from. Docker Hub gives you the tools to make this determination: the download count is a decent proxy for “how many people are using this,” and the list of updates tells you how often and how recently it’s being updated.

- Finally, definitely do look at the other poster’s notes about SSH. 5 seconds after you put up an SSH server, you’ll be getting hit with rogue login attempts.

- Definitely get a password manager, and use not just one password per server but one password per service. Your login password for the computer should be different from your login for anything else your server is running.
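“One password per service” is what a password manager’s generator does for you. This sketch only illustrates the idea with Python’s `secrets` module; the service names and the 24-character length are arbitrary choices.

```python
import secrets
import string
from typing import Dict, Iterable

ALPHABET = string.ascii_letters + string.digits + string.punctuation


def new_password(length: int = 24) -> str:
    """A random password drawn with the cryptographically secure `secrets` module."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


def passwords_for(services: Iterable[str], length: int = 24) -> Dict[str, str]:
    """One independent password per service, so one leak doesn't unlock the rest."""
    return {name: new_password(length) for name in services}
```

For example, `passwords_for(["server-login", "nextcloud", "mariadb"])` yields three unrelated passwords to store in the manager.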

The rest requires research, but these steps will protect you from the most common threats pretty effectively. The world is full of bots poking at every service they can find, so keeping them out is crucial. You won’t be protected from a dedicated, knowledgeable attacker until you do the rest of what the other poster said, and then some, so try not to make too many enemies.

The TLDR is here : https://www.digitalocean.com/community/tutorials/recommended-security-measures-to-protect-your-servers

> You won’t be protected from a dedicated, knowledgeable attacker until you do the rest of what the other poster said, and then some,

You’re right, I didn’t even get to ACME, PKI, or TOTP.

https://letsencrypt.org/getting-started/

https://openbao.org/docs/secrets/pki/

https://openbao.org/docs/secrets/totp/

And for bonus points build your own certificate authority to sign it all.

https://smallstep.com/blog/build-a-tiny-ca-with-raspberry-pi-yubikey/

Thank you for this! I’ve got some homework to do!

Good luck on your journey.

I would suggest having two servers: one to test on and one to expose to the Internet. That way, if you make a mistake, hopefully you’ll find it before it’s exposed to the Internet.

Thank you!

Paranoid external security. I’m assuming you already have a domain name. I’m also assuming you have some ICANN anonymization set up.

First is your local reverse proxy. You can manage all of this with a container called Nginx Proxy Manager, but it could benefit you to learn its inner workings first. https://www.howtogeek.com/devops/what-is-a-reverse-proxy-and-how-does-it-work/

https://cloud9sc.com/nginx-proxy-manager-hardening/

https://github.com/NginxProxyManager/nginx-proxy-manager

Next, you’ll want to proxy your IP address, since you don’t want your domain pointing straight at your home address.

https://developers.cloudflare.com/learning-paths/get-started-free/onboarding/proxy-dns-records/

Remote access is next. I would suggest setting up WireGuard on a machine that’s not your web server, but you can also run it in a container. Either way, you’ll need to punch another hole in your router pointing to your WireGuard bastion host on your local network. It has clients for Windows, Linux, Android, and iOS.

https://github.com/angristan/wireguard-install

https://www.wireguard.com/quickstart/

https://github.com/linuxserver/docker-wireguard

Now internally, I’m assuming you’re using Linux. In that case I’d suggest securing your ssh on all machines that you log into. On the machines you’re running you should also install fail2ban, UFW, git, and some monitoring if you have the overhead but the monitoring part is outside of the purview of this comment. If you’re using UFW your very first command should be

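```shell
# Allow ssh before running `sudo ufw enable`, or you can lock yourself out.
# (If sshd listens on a non-standard port, allow that port instead, e.g. `sudo ufw allow 2222/tcp`.)
sudo ufw allow ssh
```

https://www.howtogeek.com/443156/the-best-ways-to-secure-your-ssh-server/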

https://github.com/fail2ban/fail2ban

https://www.digitalocean.com/community/tutorials/ufw-essentials-common-firewall-rules-and-commands

Now, for securing the internal Linux machines: harden the kernel and disable the root user. If you do this, you should have a password manager set up. KeePassX and Bitwarden are ones I like; if those suck, I’m sure someone will suggest something better. The password manager should hold the root password for each of your Linux machines, and they should all be different passwords.

https://www.makeuseof.com/ways-improve-linux-user-account-security/

https://bitwarden.com/help/self-host-an-organization/

Finally you can harden the kernel

https://codezup.com/the-ultimate-guide-to-hardening-your-linux-system-with-kernel-parameters/

TLDR: it takes research but a good place to start is here

Correct, horse battery staple.

I couldn’t justify putting correct in my username on Lemmy. But I loved the reference too much not to use it, so here I am, a less secure truncated version of a better password.

I’m confused. I never disable root user and never got hacked.

Is the issue that the app is coded in a shitty way maybe ?

You can’t really disable the root user. You can make it so they can’t login remotely, which is highly suggested.

```shell
sudo passwd -l root
```

This disables the root user (strictly, it locks root’s password; as the passwd man page notes, other methods such as an SSH key can still work).

There’s no real advantage to disabling the root user, and I really don’t recommend it. You can disable SSH root login, and as long as root has a secure password that’s different from your own account’s, your system is just as safe, with the added advantage of having the root account in case something happens.
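`passwd -l` works by putting a `!` in front of the account’s password hash in `/etc/shadow`, so the lock is easy to verify. A small sketch that classifies a shadow entry; the sample hashes in the usage are fake, reading `/etc/shadow` needs root, and `sudo passwd -S root` reports the same status without any code.

```python
def password_status(shadow_line: str) -> str:
    """Classify the password field (2nd field) of an /etc/shadow entry."""
    fields = shadow_line.rstrip("\n").split(":")
    if len(fields) < 2:
        raise ValueError("not a shadow(5) entry")
    hashed = fields[1]
    if hashed == "":
        return "empty"  # no password needed at all: the worst case
    if hashed.startswith("!"):
        return "locked"  # what `passwd -l` produces
    if hashed.startswith("*"):
        return "no password"  # never had a usable password
    return "usable"
```

For example, `password_status("root:!$6$abc$def:19000:0:99999:7:::")` returns `"locked"`.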

That wouldn’t be defense in depth. You want to limit anything that’s not necessary as it can become a source of attack. There is no reason root should be enabled.

I don’t understand. You will still need to do administrative tasks once in a while so it isn’t really unnecessary, and if root can’t be logged in, that will mean you will have to use sudo instead, which could be an attack vector just as su.

Why do like, houses have doors man. You gotta eliminate all points of egress for security, maaaan. /s

There’s no particular reason to disable root, and with a hardened system, it’s not even a problem you need to worry about…

Another thing you can do under certain circumstances, which I’m sure someone on here will point out is deprecated, is use TCP Wrappers. If you only connect to ssh from known IP addresses or ranges, you can effectively block the rest of the world from reaching you. I used a combination of ipset lists, fail2ban, and TCP Wrappers, along with my firewall, which is also something old: iptables-persistent. I’ve also moved my ssh port up high and created several fake ports to keep anyone port-scanning my IP guessing.

These days I have all ports closed except for my wireguard port and access all of my hosted services through it.

You can’t really disable it anyway.

Hardening is mostly about preventing root login from outside in case every other layer of authentication and access control breaks, not letting regular users su/sudo into it for free, and keeping a tight grip on anything executable that has the setuid bit set. I haven’t installed a system from scratch in a long time, but I believe this is the default on most things that aren’t geared toward end-user devices, too.

I can’t even figure out how to expose my services to the internet; honestly, it’s probably for the best. WireGuard gets the job done in the end.

I’m interested: how do you expose your services (on your PC, I assume) to the internet through WireGuard? Is it through some VPN?

Wireguard IS a VPN. He has somehow through his challenges of exposing services to the internet, exposed wireguard from his home to the internet for him to connect to. Then he can connect to his internal services from there.

It’s honestly the best option and how I operate as well. I only have a handful of items exposed and even those flow through a DMZ proxy before hitting their destination servers.

Oh, I thought it was a protocol for virtual networks, that merely VPNs used. The more you know!

Edit: spelled out VPN 😅

deleted by creator

VPNs are neat. Besides being able to mask your IP and DNS, they can also give devices outside a network access to its resources.

A good example is the server at my work, which is only accessible on my work’s network. The way to access it remotely without exposing it directly to the internet is a VPN tunnel.

Just a note: while WireGuard makes its own VPN tunnel, it differs slightly in that it isn’t the typical VPN server with VPN clients connecting to it; it is more akin to a peer network. Each peer device gets its own “server” and “client” config sections in its setup file. You share the public keys between the peers beforehand and set the IPs to use.
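The peer model means each device’s config holds its own private key under `[Interface]` and the other devices’ public keys under `[Peer]`. A sketch that renders wg-quick style configs from one shared table; the key strings are placeholders (real ones come from `wg genkey` and `wg pubkey`), and the addresses, port, and endpoint are hypothetical.

```python
from typing import Dict


def configs_for(peers: Dict[str, Dict[str, str]], listen_port: int = 51820) -> Dict[str, str]:
    """Build one wg-quick style config per peer, with every other peer as a [Peer]."""
    configs: Dict[str, str] = {}
    for name, me in peers.items():
        lines = [
            "[Interface]",
            f"PrivateKey = {me['private_key']}",  # never leaves this device
            f"Address = {me['address']}/24",
            f"ListenPort = {listen_port}",
        ]
        for other_name, other in peers.items():
            if other_name == name:
                continue
            lines += [
                "",
                "[Peer]",
                f"PublicKey = {other['public_key']}",  # exchanged beforehand
                f"AllowedIPs = {other['address']}/32",
            ]
            if "endpoint" in other:  # only peers reachable from outside need one
                lines.append(f"Endpoint = {other['endpoint']}")
        configs[name] = "\n".join(lines) + "\n"
    return configs
```

Each generated text would go to its own device (e.g. as `/etc/wireguard/wg0.conf`) and be brought up with `wg-quick up wg0`.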

You should turn off ssh password logins on external facing servers at a minimum. Only use ssh keys, install fail2ban, disable ssh root logins, and make sure you have a firewall limiting ports to ssh and https.

This will catch most scripted login attempts.

If you want something more advanced, look into https://en.m.wikipedia.org/wiki/Security_Technical_Implementation_Guide and try to find an ansible playbook to apply them.

Just turn off password logins from anything but console. For all users. No matter where it runs.

It becomes second nature pretty fast, but you should have a system for storing and rotating keys.

How do I whitelist password logins? I only disabled password logins in SSHd and set it to only use a key.

I also like to disable root login by setting its default shell to nologin, that way, it’s only accessible via sudo or doas. I think there’s a better way of doing it, which is how Debian does it by default when not setting a root password, but I’m not sure how to configure that manually, or even what they do.

Right - so console/tty login is restricted by pam and its settings. So disabling ssh root logins means you can still log in as root there.

To lock root you can use passwd -l

If locking root, I would keep root’s shell so I could still sudo to root.

So my normal setup would be to create my admin user with sudo rights, set `PasswordAuthentication no` in the sshd config, and lock root with `sudo passwd -l root`. Remember to add a public key to the admin user’s `authorized_keys`, and give it a secure but typable password.

My root is now only available through sudo, and I can use a password on the console. Instead of locking root, you can give it a secure, typable password. That way root can log in from the console, so you don’t need sudo for root access there.

It boils down to what you like and what risks you take compared to usable system. You can always recover a locked root account if you have access to single-user-mode or a live cd . Disk encryption makes livecd a difficult option.

To whitelist password logins in ssh, you can match the username and set it to yes after setting no for everyone. But I see no reason for password logins over ssh; the console is safe enough (for me).
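The per-user exception described above uses a `Match` block at the end of `sshd_config`: settings after `Match User ...` override the global ones for matching users only. A sketch that generates such a fragment; the usernames in the usage are hypothetical, and it assumes an OpenSSH recent enough to know `KbdInteractiveAuthentication`.

```python
from typing import Iterable


def sshd_password_allowlist(users: Iterable[str]) -> str:
    """sshd_config fragment: keys only by default, passwords for the listed users.

    Match blocks must come last: every line after a Match applies only to
    matching connections, until the next Match.
    """
    names = sorted(set(users))
    lines = ["PasswordAuthentication no", "KbdInteractiveAuthentication no"]
    if names:
        lines += [f"Match User {','.join(names)}", "    PasswordAuthentication yes"]
    return "\n".join(lines) + "\n"
```

Check the edited config with `sudo sshd -t` before reloading sshd, so a typo doesn’t lock you out.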

Did all that, minus the no ssh root login (only key, obviously) plus one failed attempt, fail2ban permaban.

Have not had any issues, ever

And this is why every time a developer asks me for shell access to any of the deployment servers, I flat out deny the request.

Good on you for learning from your mistakes, but a perfect example for why I only let sysadmins into the systems.

You’re not wrong! Devops made me lazy

Please examine where devops allowed non-system people to be the last word on altering systems. This is a risk that needs block-letter indemnification or correction.

It’s not that devops made ya lazy. I’ve been doing devops since before they coined the term, and it’s a constant effort to remind people that it doesn’t magically make things safe, but keeping it safe is still the way.

Ah not to discount devops, I mean that in a good way.

Devops made me lazy in that for the past decade, I focus on just everything inside the code base.

I literally push code into a magic black box that then triggers a rube goldberg of events. Servers get instanced. Configs just get magically set up. It’s beautiful. Just years of smart people who make it so easy that I never have to think about it.

Since I can’t pay my devops team to come to my house, I get to figure it all out!

deleted by creator

This sounds like something everyone should go through at least once, to underscore the importance of hardening that can be easily taken for granted

Permitting inbound SSH attempts, but disallowing actual logins, is an effective strategy to identify compromised hosts in real-time.

The origin address of any login attempt betrays that it shouldn’t be trusted, and it can be fed into tarpits and block lists.

Endlessh and fail2ban are great for setting up an ssh honeypot. There is even a Prometheus exporter version for some nice stats.

Just expose endlessh on your public port 22 and if needed, configure your actual ssh on a different port. But generally: avoid exposing ssh if you don’t actually need it or at least disable root login and disable password authentication completely.

https://github.com/skeeto/endlessh
https://github.com/shizunge/endlessh-go
https://github.com/itskenny0/fail2ban-endlessh
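Endlessh exploits a detail of the SSH protocol: RFC 4253 lets a server send other lines before its `SSH-` version string, so a tarpit can drip junk lines forever and keep bots waiting. A minimal asyncio sketch of that idea, not Endlessh’s own code; the port 2222 and the 10-second delay are assumptions, and binding to port 22 would need elevated privileges.

```python
import asyncio
import random
import string

_CHARS = string.ascii_letters + string.digits


def banner_line(max_len: int = 32) -> bytes:
    """One random line to send before the (never-sent) SSH version string.

    Such lines must not start with "SSH-"; using only letters and digits
    guarantees that.
    """
    text = "".join(random.choice(_CHARS) for _ in range(random.randint(3, max_len)))
    return (text + "\r\n").encode("ascii")


async def tarpit(reader: asyncio.StreamReader, writer: asyncio.StreamWriter, delay: float = 10.0) -> None:
    """Drip one junk line every `delay` seconds until the client gives up."""
    try:
        while True:
            await asyncio.sleep(delay)
            writer.write(banner_line())
            await writer.drain()
    except ConnectionError:
        pass  # client disconnected
    finally:
        writer.close()


async def main(host: str = "0.0.0.0", port: int = 2222) -> None:
    server = await asyncio.start_server(tarpit, host, port)
    async with server:
        await server.serve_forever()
```

Start it with `asyncio.run(main())`. Each trapped bot costs you one idle coroutine while it waits for a banner that never arrives.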

If it is your single purpose to create a blocklist of suspect IP addresses, I guess this could be a honeypot strategy.

If it’s to secure your own servers, you’re only playing whack-a-mole using this method. For every IP you block, ten more will pop up.

Instead of blacklisting, it’s better to whitelist the IP addresses or ranges that have a legitimate reason to connect to your server, or alternatively use something like GeoIP firewall rules to limit the scope of your exposure.

Since I’ve switched to using SSH keys for all auth, I’ve had no problems I’m aware of. Plus, I don’t need to remember a bunch of passwords.
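An allow list comes down to one membership test per connection. A sketch with Python’s `ipaddress` module; the ranges in the usage are documentation addresses standing in for your own, and in practice the rule belongs in the firewall (ufw, nftables) rather than in application code.

```python
import ipaddress
from typing import Iterable, List, Union

Network = Union[ipaddress.IPv4Network, ipaddress.IPv6Network]


def parse_allowlist(entries: Iterable[str]) -> List[Network]:
    """Accept single addresses or CIDR ranges; strict=False tolerates host bits."""
    return [ipaddress.ip_network(e.strip(), strict=False) for e in entries if e.strip()]


def is_allowed(src: str, allowlist: List[Network]) -> bool:
    """True if the source address falls inside any allowed network."""
    addr = ipaddress.ip_address(src)
    # Membership between IPv4 and IPv6 objects is simply False, not an error.
    return any(addr in net for net in allowlist)
```

For example, with `parse_allowlist(["192.0.2.0/24", "198.51.100.7", "2001:db8::/32"])`, a connection from `203.0.113.5` would be refused.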

But then I’ve had no training in this area. What do I know

I’ve recently seen login attempts using keys, found it curious…

Probably still looking for hosts that have weak Debian SSH keys that users forgot to replace. https://www.hezmatt.org/~mpalmer/blog/2024/04/09/how-i-tripped-over-the-debian-weak-keys-vuln.html

I disabled ssh on IPv4 and that reduced hacking attempts by 99%.

It’s on IPv6 port 22 with a DNS pointing to it. I can log into it remotely by hostname. Easy.

Had this years ago except it was a dumbass contractor where I worked who left a Windows server with FTP services exposed to the Internet and IIRC anonymous FTP enabled, on a Friday.

When I came in on Monday, it had become a repository for warez, malware, and questionable porn. We wiped it rather than trying to recover anything.

questionable?

Yeah just like that. Ask more questions