- cross-posted to:

- [email protected]

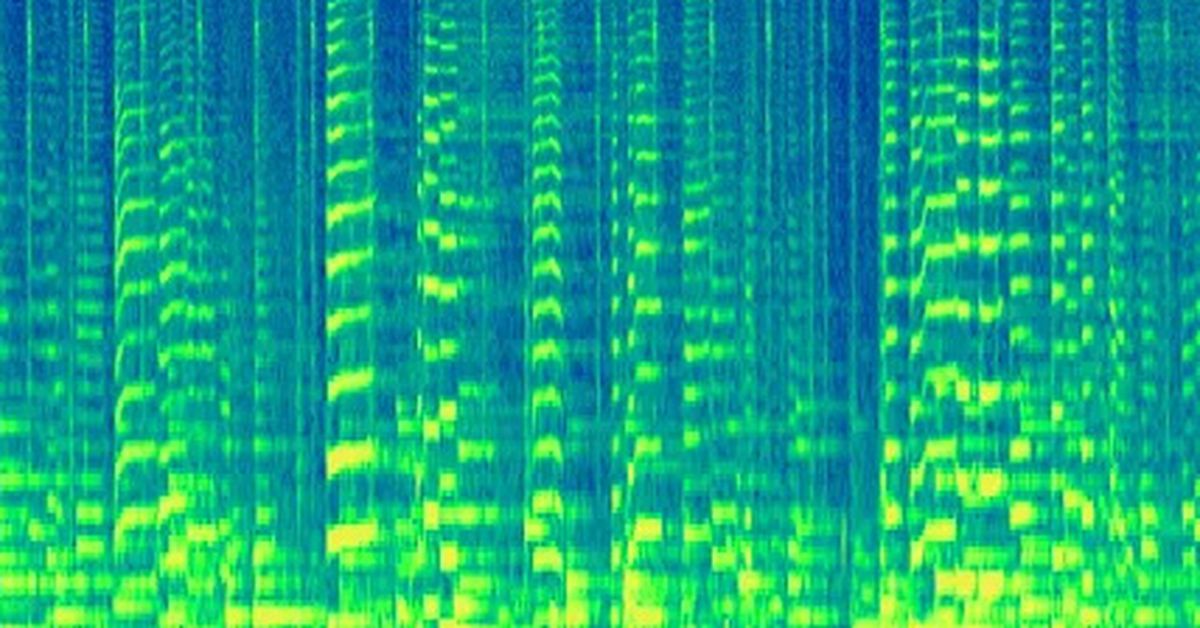

Google is embedding inaudible watermarks right into its AI-generated music::Audio created using Google DeepMind’s AI Lyria model will be watermarked with SynthID to let people identify its AI-generated origins after the fact.

Sounds like a bad journalist hasn’t understood the explanation. A spectrogram contains all the same data as was originally encoded. I guess all it means is that the watermark is applied in the frequency domain.
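To make the "frequency domain" point concrete, here is a toy sketch in plain Python. It is not how SynthID works (the article doesn't say), and `embed`, `bin_magnitude`, the bin index, and the strength are all made-up names and values for illustration. It shows two things: a full complex spectrum round-trips back to the original samples, and a small nudge to one frequency bin barely changes the waveform but is easy to find again if you know where to look.

```python
import cmath
import math

def dft(x):
    """Naive discrete Fourier transform (fine for tiny toy signals)."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]

def idft(spectrum):
    """Inverse DFT, returning the real part of each sample."""
    n = len(spectrum)
    return [(sum(spectrum[k] * cmath.exp(2j * cmath.pi * k * t / n)
                 for k in range(n)) / n).real
            for t in range(n)]

def embed(signal, bin_index, strength):
    """Hypothetical toy watermark: add a small value to one frequency bin."""
    n = len(signal)
    spectrum = dft(signal)
    spectrum[bin_index] += strength
    spectrum[n - bin_index] += strength  # mirror bin keeps the output real-valued
    return idft(spectrum)

def bin_magnitude(signal, bin_index):
    """Detector side of the toy: how much energy sits in the chosen bin."""
    return abs(dft(signal)[bin_index])

N = 64
clean = [math.sin(2 * math.pi * 5 * t / N) for t in range(N)]  # tone in bin 5
roundtrip = idft(dft(clean))                                   # same data back
marked = embed(clean, bin_index=20, strength=0.5)

largest_change = max(abs(a - b) for a, b in zip(clean, marked))
print("max sample change:", largest_change)
print("bin 20 before:", bin_magnitude(clean, 20))
print("bin 20 after: ", bin_magnitude(marked, 20))
```

A real scheme would have to survive compression, resampling, and editing, which this toy makes no attempt at. Note too that the round trip relies on the full complex spectrum; a magnitude-only spectrogram picture discards phase.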

Also this isn’t new by any stretch… Aphex Twin would like a word

Well, encoding stuff in the spectrogram isn’t new, sure. But encoding stuff into an audio file that is inaudible but robust to incidental modifications to the file is much harder. Aphex Twin’s stuff is audible!

I would like to know what makes it so robust. The article explains very little. Is it in the high frequencies, higher than the human ear can hear? Compression will affect that, plus it’s going to piss dogs off. Could be something with the phasing too. Filters and effects might be able to get rid of the watermark.

I don’t know what frequencies are annoying for dogs, but I’m guessing it’s above 24 kHz, so no sound file or sound system is going to be able to store or produce it anyway.
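For reference, the ceiling comes from the sample rate: a file can only represent frequencies up to half of it (the Nyquist limit). A quick sketch, assuming the common 44.1 kHz and 48 kHz rates; the helper names `nyquist_hz` and `alias_hz` are made up for illustration.

```python
def nyquist_hz(sample_rate_hz):
    """Highest frequency a given sample rate can represent."""
    return sample_rate_hz / 2

def alias_hz(tone_hz, sample_rate_hz):
    """Frequency a tone appears at after sampling without an anti-alias filter."""
    folded = tone_hz % sample_rate_hz
    return min(folded, sample_rate_hz - folded)

for rate in (44_100, 48_000):
    print(rate, "Hz sample rate ->", nyquist_hz(rate), "Hz ceiling")

# A 30 kHz tone cannot be stored at 48 kHz; unfiltered, it folds back down.
print("30 kHz sampled at 48 kHz shows up at", alias_hz(30_000, 48_000), "Hz")
```

So at those rates the ceiling is 22.05 kHz or 24 kHz, and anything above it is either filtered out before sampling or folded down into the audible band.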

There will certainly be some way to get rid of the watermark. But it might nevertheless persist through common filters.